Boomi Companion: Equipping the agents your teams already use

Boomi Companion is our portfolio of open source Agent Skills and plugins — designed to give any AI agent the expertise to do real Boomi work.

Agent Skills are an open standard, which means Boomi Companion slots naturally into coding agents like Claude Code, OpenAI Codex, and Google Antigravity, as well as into chat experiences like Claude Cowork. Wherever your team's agents are doing real work, Boomi Companion can come along.

This first release focuses on hands-on building, with skills for implementing integration processes, EDI (Electronic Data Interchange), and Event Streams. It can also pull from existing recipes and templates on marketplace.boomi.com. The rest of this post walks through what's included, why we built it the way we did, and what it looks like in practice.

The human experience

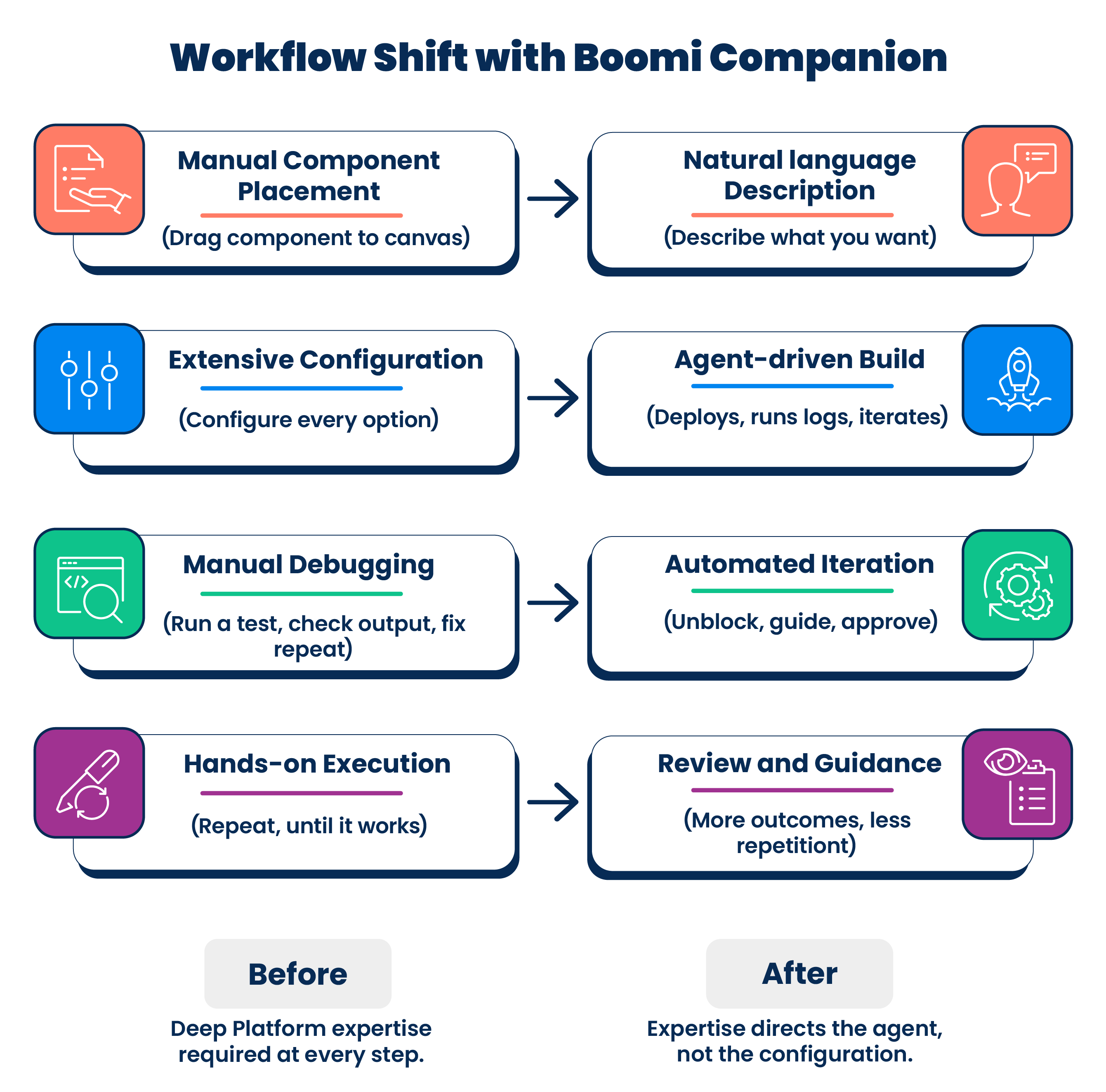

Before Boomi Companion, a Boomi developer's Tuesday morning looked like this: drag a component onto the canvas, configure it, run a test, check the output, configure the next one, run a test, check the output. The work was niche and human-centric — you had to know every pane and option in the platform UI, and you had to carry a deep mental model of how the Boomi Platform thinks. You had to know how documents flow, split, combine, and route through the branches of a process, how components relate to each other, and how profiles grant access to specific pieces of data. Experience was necessary, and the hardest-won parts were configuration mechanics rather than integration design.

With Boomi Companion, that focus moves. Developers describe the integration to an agent in natural language — sometimes a quick conversation, sometimes a deep planning session, sometimes a fully-formed implementation spec — and the agent goes off to work. The developer's day shifts to direction, unblocking the agent when it gets stuck, and reviewing what it produced. The skill ceiling didn't drop. The floor moved.

The following examples show what that shift looks like in practice from our own team:

- Building an EDI profile and map (Manual vs. Boomi Companion): One of our EDI specialists, with three decades in the field, recently timed themselves as they manually built a new EDI profile and map. It took one hour and twenty minutes. When the same task was handed to Boomi Companion, it built four EDI profiles and maps in twenty minutes. The headline number is roughly a 16x lift on that workload, but the more interesting shift is qualitative: A morning's worth of one task became "queue up a few Claudes and go work on something else." That isn't faster work. It's different work.

- Building a personal knowledge store (Manual vs. Boomi Companion): At the other end of the experience curve, one of our enablement specialists recently built a "second brain," a personal knowledge store for capturing notes, decisions, and references in a structured way. They set up the Boomi Data Hub layer. Boomi Companion built the integration processes that move data in and out. The result was a working app built exactly as imagined and ready to use. The integration work had been the unbridgeable gap. Now it isn't.
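
For readers who want to check the headline number in the EDI example, here is the arithmetic as a short sketch. It assumes one profile-and-map pair is the unit of work and that the twenty minutes is wall-clock time for all four pairs; the variable names are ours, not part of any Boomi tooling.

```python
# Numbers quoted in the EDI example; one "unit" is one EDI profile plus its map.
manual_minutes = 80      # 1 hour 20 minutes for one unit, built by hand
manual_units = 1
companion_minutes = 20   # wall-clock time for the Boomi Companion run
companion_units = 4      # units produced in that run

manual_per_unit = manual_minutes / manual_units
companion_per_unit = companion_minutes / companion_units
lift = manual_per_unit / companion_per_unit

print(f"{companion_per_unit:.0f} minutes per unit, {lift:.0f}x lift")
```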

When Boomi Companion builds, it's drawing on Boomi's existing Platform assets — components, connectors, runtimes, observability, and security. The skill's job is to teach the agent how to use that Platform, a far smaller problem than teaching it to build, run, manage, and govern enterprise integrations from scratch. When the agent is done, you review what it produced using the same Platform tools you already know: the design canvas, execution traces, and run-level visibility.

With that substrate underneath, here's what ships in the first release of Boomi Companion.

What's inside

The initial release of Boomi Companion ships two skills, available either as standalone agent-agnostic skills or as Claude plugins:

- boomi-integration: The boomi-integration skill is the core of Boomi Companion v1 and covers the day-to-day work of designing, building, testing, deploying, and diagnosing across integration processes, EDI/B2B, and Event Streams.

- boomi-marketplace: The boomi-marketplace skill is an optional add-on. It gives agents the ability to search a library of reference templates on marketplace.boomi.com, which they can import as a starting point for a project or as informative grounding.

Boomi Companion sessions tend to take three shapes:

1. Build something new

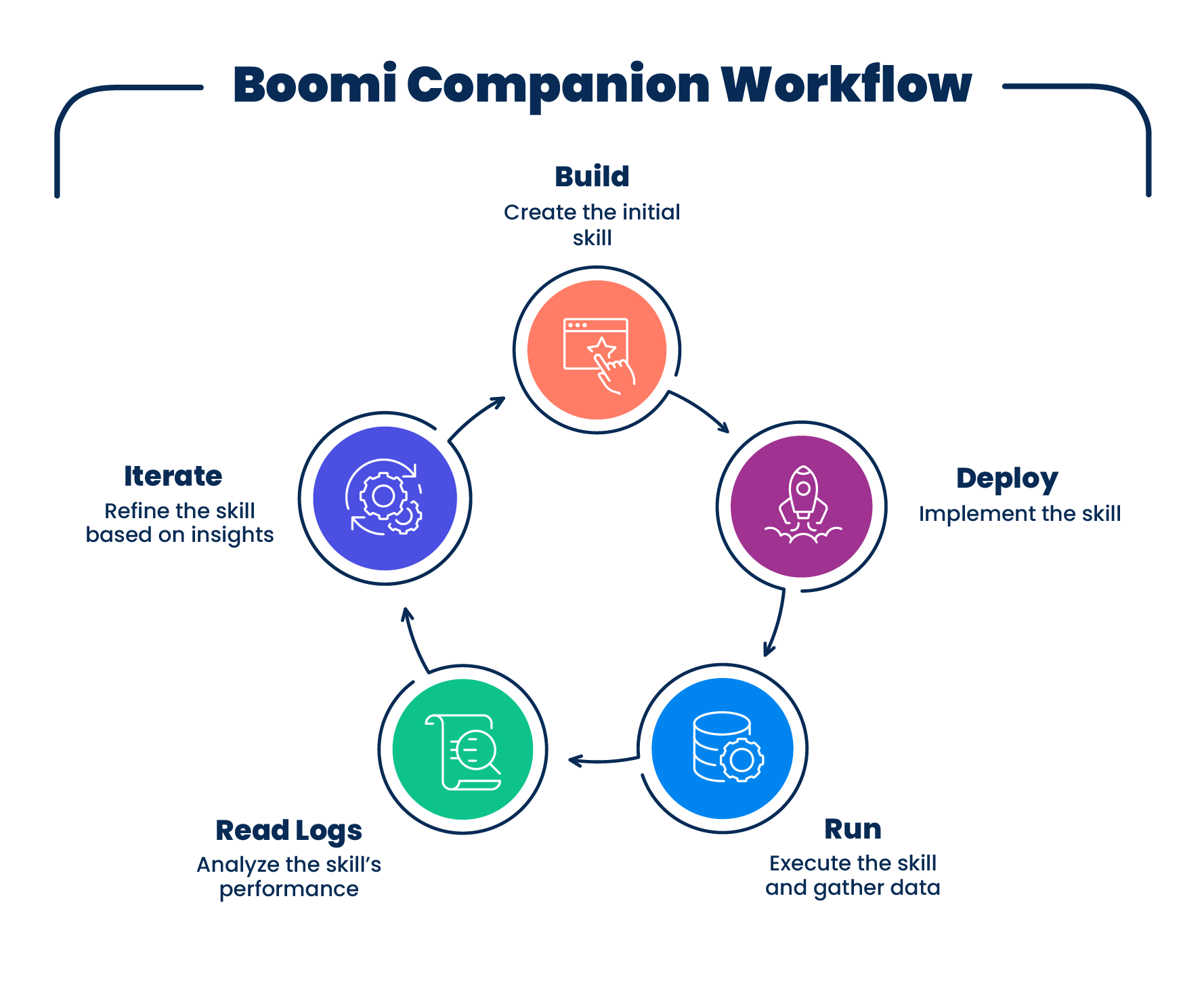

Boomi Companion can take a project from a blank canvas to a working integration. Most sessions start with a conversation: a few sentences of intent, a question, a sketch of what you want. Some stay in conversation mode for a while, discussing the architecture, debating trade-offs, and collaboratively shaping a plan before any components are built. Other times, you hand it a fully formed spec or a link to API documentation. Your agent can meet you in whichever mode you prefer. From there, it creates a project-specific folder, builds components like profiles and maps, configures connections, and assembles it all into a process. It packages and deploys to your configured runtime, runs test executions, and reads the logs, not just to catch failures but because running the process is how it learns what the process is actually doing, the same way a human Boomi developer would. It then iterates as autonomously as you want: refining, extending, fixing. The developer's role is to direct, unblock, and review.

2. Modify what's already there

Point Boomi Companion at existing assets in your Boomi account, and it pulls the component (along with its dependencies) into the local workspace. You can paste a link, or the agent can search by name in your account. From there, it can refactor, extend, analyze, or discuss. This pull-from-platform pattern is also how the skill handles GUI-bound configurations, such as OAuth flows or branded connector imports. Set them up in the Boomi UI, and Boomi Companion takes it from there.

3. Diagnose and fix

When something's broken, Boomi Companion can work backward from the failure. Give it a failing execution ID, and it pulls the logs, downloads the process, identifies what went wrong, and either walks you through it or starts on a fix. This is the entry point for reactive work — fixing what's broken at runtime rather than building or extending by design.

Where the edges are

Boomi Companion is strong with many of our technology connectors, plus maps, profiles, message steps, branches, etc. — the day-to-day shape of most integration work. Branded SaaS connectors (Salesforce, NetSuite, and similar) are partially in scope: Setup involves GUI flows that aren't API-exposed, but Boomi Companion picks up the build from there once the connection exists. When Boomi Companion encounters something it doesn't know, you can provide context or have it search marketplace.boomi.com for samples.

The initial release covers Integration, EDI, Event Streams, and Marketplace. Future releases will expand into other Platform services, such as Flow, Data Hub, API Management, and Agentstudio, as well as operational concerns, including architecture, custom connector development, and runtime management.

A note on distribution

Most developers install Boomi Companion via the auto-updating plugin marketplace in Claude Code. This is the simplest path to staying current with releases. However, the core skills follow the Agent Skills open standard, and we publish both the plugin and standalone skill form factors. Teams using OpenAI Codex, Google's coding agents, or other harnesses can clone the skills and run them directly. The standalone skill format also works nicely if you want to fork and customize. Everything is open source under the BSD-2-Clause license.

How we built it

The integration skill ships with close to 25,000 lines of experimentally validated, human-corroborated reference content. Its quality comes from a handful of principles we stayed disciplined on. We treat every line as future tech debt.

The over-generalization problem

Most of what makes Boomi Companion's integration skill effective isn't what we put into it. It's what we kept out.

When you give an AI model a few samples of how something works, it wants to confidently extrapolate to a complete picture of how everything works. Occasionally, that extrapolation is right. In our experience, often it isn't. This has been the most fundamental observation from building this skill: We reject most of the content the model wants to include, and we edit much of the rest.

Let's look at the following two failure patterns:

- Induction from incomplete samples: We were documenting a Boomi component in which all the examples we fetched used two specific values for a given field. The agent wanted to write that those two values were the only options the field accepted. They weren't; they were just the only ones we'd seen. Treating "the data I have" as "the data that exists" is an induction failure, and it's how confident agents and absentminded skill builders end up writing reference material that's silently wrong.

- Analogy from out-of-domain priors: A different shape of the same problem came up while documenting Boomi's Branch step. Branches in most programming languages run in parallel, and the agent's general training pulled hard in that direction. In Boomi, they don't: A Branch step runs the first path to completion before starting the second. The agent wanted to write the parallel-execution claim because parallel execution is the prior; the Platform's actual behavior directly conflicted with the agent's intended assertion.
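
To make the Branch example concrete, here is a toy model of the behavior described in the second bullet: each path runs to completion, in order, before the next one starts. This is plain Python for illustration, not Boomi code; the function names and trace strings are invented for the sketch.

```python
from typing import Callable, List

Path = Callable[[List[str]], None]


def run_branch_sequentially(paths: List[Path]) -> List[str]:
    """Toy model: run each path to completion, in order, before the next starts."""
    trace: List[str] = []
    for path in paths:
        path(trace)
    return trace


def path_one(trace: List[str]) -> None:
    trace.append("path 1: step A")
    trace.append("path 1: step B")


def path_two(trace: List[str]) -> None:
    trace.append("path 2: step A")


trace = run_branch_sequentially([path_one, path_two])
print(trace)
```

A parallel model could interleave the steps of the two paths in the trace; the sequential model never does, which is the distinction the agent's prior got wrong.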

Both failure modes are pervasive. Both are the kind of confidently wrong content that an AI-generated skill will be full of, and that’s why good process and human expertise are (still) required for skill-building projects of any significance.

As part of our lifecycle workflow, we use a runtime toggle validation methodology: Claims don't enter the skill until we can produce both the success and failure modes by changing only the variable in question. If we want to assert that a field requires Y, our fleet of experimenter Claudes needs to demonstrate the success path with Y present and the failure path with Y absent, while holding everything else constant. The toggle is the unit of truth. Without it, the fact is not sturdy enough to be included in our skill.
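
The toggle rule can be sketched in a few lines. This is a minimal illustration under our own assumptions, not the harness the team runs: `toggle_test`, `ToggleResult`, and the `run_experiment` callback are hypothetical names, and `fake_platform` stands in for a real Platform call.

```python
from dataclasses import dataclass
from typing import Callable, Mapping


@dataclass(frozen=True)
class ToggleResult:
    claim: str
    passed_with: bool     # the experiment succeeded with the variable present
    failed_without: bool  # the experiment failed with the variable absent

    @property
    def validated(self) -> bool:
        # A claim is admitted only when both sides of the toggle behave as predicted.
        return self.passed_with and self.failed_without


def toggle_test(
    claim: str,
    baseline: Mapping[str, object],
    field: str,
    value: object,
    run_experiment: Callable[[Mapping[str, object]], bool],
) -> ToggleResult:
    """Run the same experiment twice, changing only `field`."""
    with_field = {**baseline, field: value}
    without_field = {k: v for k, v in baseline.items() if k != field}
    return ToggleResult(
        claim=claim,
        passed_with=run_experiment(with_field),
        failed_without=not run_experiment(without_field),
    )


def fake_platform(config: Mapping[str, object]) -> bool:
    """Stand-in for a real Platform call: accepts a config only if it has "Y"."""
    return "Y" in config


result = toggle_test(
    claim="the field requires Y",
    baseline={"name": "demo"},
    field="Y",
    value="some-value",
    run_experiment=fake_platform,
)
print(result.validated)
```

If the experiment succeeds both with and without the field, `validated` is false: the claim "requires Y" was never demonstrated, only assumed.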

The validation methodology leans on agents — a fleet of experimenter Claudes running the toggles. The irreducibly human part is pattern recognition: catching over-extrapolation, invented premises, code bloat, subtle misreadings of Platform behavior — a kind of spidey sense for when the agent is confidently bullshitting in whatever form it takes that day.

The cost of that discipline is real. The skill content takes significantly more time to produce than the AI-drafted version would. The benefit is also real: The agent operating against this skill isn't presented with subtle inaccuracies, so it isn't producing ever-so-broken integrations that neither the agent nor the developer will notice until production.

The architecture predates the standard

Boomi Companion's earliest version, in autumn 2025, was a single repository where the agent reviewed platform knowledge, ran tools, and built project content all in one workspace. We had stumbled into a design akin to what's now called progressive disclosure, organizing knowledge so the agent only loaded what it needed, when it needed it. We didn't have a name for it. We just noticed that it was effective.

When Anthropic published the Agent Skills standard, the fit was immediate and intuitive. Skills cleanly separate the knowledge layer (the skill) from the work layer (the project workspace), allowing us to apply a single skill across many projects without dragging project state along. Slotting in wasn't a rewrite; it was a recognition.

Lean tooling: Python, then Bash

In all forms of Boomi Companion so far, we've wrapped the tools directly into the skill rather than relying on specific MCP servers or asking the agent to improvise its own Platform API calls.

The earliest tooling was Python. Version resolution and virtual environments kept causing friction. Eventually, we asked whether we needed any of it. The answer was no. We rewrote everything in Bash and shed all the Python dependencies.

The same instinct shaped how the tools themselves are designed. When we built “component-pull,” the model's instinct was to write a script that could parse every possible Boomi dependency structure up front. We resisted that. Instead, the CLI tool fetches one component at a time. The skill instructs the agent to read what it pulls, decide which dependencies are present, and pull each of those — cascading down the tree, one tool call per node. In all projects that followed, we opted for less code and more model judgment.
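
The shape of that cascade can be sketched as follows. In the skill, the agent's own reading of each pulled component decides what to follow next; in this sketch a plain list of IDs stands in for that judgment. `pull_one`, the `"references"` key, and the fake account are all hypothetical and do not reflect the real CLI or component format.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Set

# Hypothetical shape: a pulled component lists the IDs it references.
Component = Dict[str, List[str]]


def pull_tree(root_id: str, pull_one: Callable[[str], Component]) -> List[str]:
    """Pull a component and everything it references, one fetch per node."""
    pulled: List[str] = []
    seen: Set[str] = set()
    pending: Deque[str] = deque([root_id])
    while pending:
        component_id = pending.popleft()
        if component_id in seen:
            continue  # a shared dependency is fetched only once
        seen.add(component_id)
        component = pull_one(component_id)  # one tool call per node
        pulled.append(component_id)
        pending.extend(component.get("references", []))
    return pulled


# A fake account standing in for the Platform.
fake_account: Dict[str, Component] = {
    "process": {"references": ["map", "connection"]},
    "map": {"references": ["profile-in", "profile-out"]},
    "connection": {"references": []},
    "profile-in": {"references": []},
    "profile-out": {"references": []},
}

order = pull_tree("process", fake_account.__getitem__)
print(order)
```

The fetch function knows nothing about dependency structure. All traversal logic lives in the caller, which is where the skill places the agent.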

In tandem with the Bash migration, we adopted a cleaner posture on credentials, which fall into two types worth distinguishing:

- Platform API credentials: These are how Boomi Companion talks to the Boomi Platform. The scripts source auth from the project's .env file, so the agent doesn't need to read or populate them directly. To be clear-eyed about the threat model: This isn't a hard boundary; a sufficiently creative agent can find anything on its host machine. Use service credentials and limit access as appropriate.

- Connector credentials: These are how the integrations themselves authenticate to third-party systems (Salesforce, NetSuite, your SFTP server, etc.). The best practice is to configure them in the Boomi Platform GUI and give the agent a link to the existing connection, so it only ever sees an encrypted reference. That said, we're not dictating your workflow: If you're comfortable handing the agent a credential directly, it understands how to build with it.
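
The Platform API pattern, where a tool loads credentials itself so the caller never handles them, can be illustrated with a small Python analogue. The shipped tools are Bash scripts that source the file; this sketch is not their implementation, and the function names and the simple KEY=VALUE parsing are our own assumptions.

```python
import os
import subprocess
from pathlib import Path
from typing import Dict, List


def load_env_file(path: Path) -> Dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    values: Dict[str, str] = {}
    for raw in path.read_text(encoding="utf-8").splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def run_tool(command: List[str], env_path: Path) -> int:
    """Run a tool with credentials injected into the child environment only."""
    child_env = {**os.environ, **load_env_file(env_path)}
    # The values are never printed or returned; only the exit code comes back.
    return subprocess.run(command, env=child_env, check=False).returncode
```

The caller passes a command and a path and gets back an exit code. As the first bullet notes, this is a convenience rather than a security boundary: anything that can read the file can read the credentials.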

The agent tests its own work

Boomi Companion can deploy what it builds, run it on your test environment, read the execution logs, and use what it observes to iterate. Without that loop, we estimate that the skill would be about one-quarter as effective. The agent doesn't need to be told that its first attempt was wrong — it sees the output, compares it to what was expected, and corrects course. It’s the same loop a human Boomi developer runs through: sometimes you don’t know what you need to build until you see an output or an error.

Giving our agents the ability to test and iterate is probably the single highest-leverage design decision in Boomi Companion. Anything an agent can verify on its own is something the human doesn't need to verify for it.
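
As a rough sketch of that loop, the following assumes three hypothetical callbacks (`build`, `deploy_and_run`, `is_expected`) that stand in for the agent's building, the runtime execution, and the comparison against expected output. None of these names come from Boomi Companion itself.

```python
from typing import Callable, Optional, Tuple


def iterate_until_passing(
    build: Callable[[Optional[str]], str],
    deploy_and_run: Callable[[str], str],
    is_expected: Callable[[str], bool],
    max_attempts: int = 5,
) -> Optional[Tuple[int, str]]:
    """Build, run, observe, and feed the observation into the next attempt."""
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        artifact = build(feedback)
        observed = deploy_and_run(artifact)
        if is_expected(observed):
            return attempt, artifact
        feedback = observed  # the log or error becomes input to the next build
    return None  # give up and hand back to the human


def fake_build(feedback: Optional[str]) -> str:
    # First attempt has no feedback; the second one reacts to the error.
    return "v1" if feedback is None else "v2"


def fake_run(artifact: str) -> str:
    return "ok" if artifact == "v2" else "error: missing field"


outcome = iterate_until_passing(fake_build, fake_run, lambda out: out == "ok")
print(outcome)
```

The `max_attempts` bound reflects the developer's remaining role in the loop: when the agent cannot converge on its own, the work returns to a human to unblock it.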

The eval harness

Through the migration from monolith to skill, and the months that followed, we ran an eval harness focused on a single question: How often does the agent build a Boomi component correctly on the first try? First-shot success is the metric that surfaces skill quality — if the agent troubleshoots through a problem and eventually gets the right answer, we don't learn anything about the skill's gaps from the final successful result. We only learn what the skill is missing when we see where the agent fails on the first attempt and has to recover. We internally set up Claude Code hooks to track every component create-or-update call, measured first-shot success against a fixed set of representative tasks, and then used the result to know whether each round of skill changes was helping or quietly making things worse.
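
The metric itself is simple to compute once the calls are logged. The sketch below assumes a hypothetical log record with a task ID, a component name, and a success flag, recorded in call order; the real hook output is not described in this post and may look different.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple


@dataclass(frozen=True)
class ComponentCall:
    task_id: str
    component: str
    succeeded: bool


def first_shot_success_rate(calls: Iterable[ComponentCall]) -> float:
    """Share of (task, component) pairs whose first logged call succeeded."""
    first_outcome: Dict[Tuple[str, str], bool] = {}
    for call in calls:
        # setdefault keeps only the first outcome; later retries are ignored.
        first_outcome.setdefault((call.task_id, call.component), call.succeeded)
    if not first_outcome:
        raise ValueError("no component calls were logged")
    return sum(first_outcome.values()) / len(first_outcome)


log = [
    ComponentCall("task-1", "map", False),     # first attempt failed
    ComponentCall("task-1", "map", True),      # recovery does not count
    ComponentCall("task-1", "profile", True),
    ComponentCall("task-2", "process", True),
]
rate = first_shot_success_rate(log)
print(f"{rate:.2f}")
```

Ignoring the retries is the point: an eventual success on the map would hide the gap in the skill that caused the first failure.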

That eval is saturated now. The agent comfortably hits 100% first-shot success on the original eval set, which means it's no longer telling us whether the next version of the skill is incrementally better. Some of that lift is the skill maturing. Some of it is the underlying models maturing. We started on Sonnet 4.0 and are now on Sonnet 4.6 and Opus 4.7. We're building the next-generation eval as a CLI debug mode — so that it will work with any agent, not just Claude Code — plus a broader "obstacle course" of harder tasks. That may be a future post.

We invite the progress

Boomi Companion as a whole, and these initial implementation-focused skills specifically, will evolve. Parts of the current skill will sunset as models improve, as Platform-native search gets better, and as UI computer use becomes more widespread. The skills are an evolving snapshot of what works, not a permanent fixture.

The thesis

All tested AI agents, including Claude Code with the strongest Anthropic models, have historically struggled to build a "Hello World" Boomi process via the component API using only their training data and publicly available information, including web search results. There isn't much information about the underlying Boomi Platform components out there to learn from, and the Platform API is somewhat opaque. We've seen agents recover by crawling their connected accounts for samples, which is creative problem-solving but not indicative of innate knowledge of how the platform works. Strip the references, point them at an empty account, and they fail.

That floor is the empirical proof of what the underlying models actually know about Boomi: not enough to build anything. The skill content is what bridges that floor to productive Boomi projects and outcomes.

We expect that floor to rise. As models get smarter, as they learn from public Boomi Companion repos and from the corpus of integration work that gets done with them, the skill will become less load-bearing. Some of what we've written will be absorbed into improving base models; some will be replaced by tooling that didn't exist when we started; some we'll replace ourselves with better approaches. That's The Bitter Lesson, and we hold it close.

Try it out

Boomi Companion is live today. The integration skill turns natural language prompts into deployed Boomi processes. The marketplace skill grounds your agent in a library of expert-built reference patterns. Both are open source, available as Claude plugins or as standalone agent-agnostic skills.

More skills and plugins are coming, shaped by what we hear from the community about how they want to use this.

Ready to see it in action? Get started today. Install the plugin, point it at a Boomi account, and ask it to build something.

We'd love to hear what you build with it, what works, and what doesn't. If you're on the team that created Agent Skills, we're fans; drop us a line. Send feedback to developer-offerings@boomi.com.

Acknowledgments

Boomi Companion v1 is the work of Ben DeBoer, Shasta Hayes, Chris Cappetta, and Claude. Thanks to Boomi colleagues, early-adopter customers, and partners for input throughout, and to Anthropic's Agent Skills team for the standard.